How to Encode Long Text Using Large Language Models?

This blog explores methods for encoding long contexts using large language models, focusing on techniques for RAG and long document classification.

Why Is Long Context So Hard?

Over the past few years, large language models (LLMs) have made remarkable progress in extending context length limits. For example, BERT-based models typically support up to 512 tokens, while standard GPT-3 models can handle 2,048 tokens. GPT-4 offers two configurations: one with 8,192 tokens and another with an extended window of 32,768 tokens (32K tokens). Recently, Google announced a 2 million-token context window for Gemini 1.5 Pro. However, using models with larger context windows does not necessarily lead to better performance. I hope this blog helps dispel some of the myths about long contexts and explains some current solutions to this challenge.

The limitations of the context window stem from the inherent properties of the transformer architecture itself. Most current LLMs, such as GPT and LLaMA, rely on the transformer architecture and its self-attention mechanism. This mechanism compares each token in the input sequence with every other token, so both memory usage and computational cost grow quadratically with context length. To address the limitations imposed by context length, two main research directions have emerged. The first focuses on training models with larger context windows, aiming to extend these limits. Some research has explored fine-tuning LLMs with longer context inputs.
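To make the quadratic growth of self-attention concrete, here is a rough back-of-the-envelope sketch. It counts only the entries of a single attention score matrix for one head, and the 2 bytes per entry (fp16) is an assumption for illustration. Real implementations may never materialize the full matrix, so treat the numbers as an intuition aid rather than a measurement.

```python
def attention_matrix_entries(seq_len: int) -> int:
    """Number of pairwise token comparisons in one self-attention head."""
    return seq_len * seq_len


def attention_matrix_mib(seq_len: int, bytes_per_entry: int = 2) -> float:
    """Memory for one full score matrix in MiB (assumes fp16 entries)."""
    return attention_matrix_entries(seq_len) * bytes_per_entry / 2**20


# Context sizes mentioned above: BERT, GPT-3, and the two GPT-4 configurations.
for n in (512, 2_048, 8_192, 32_768):
    print(f"{n:>6} tokens -> {attention_matrix_entries(n):>13,} scores, "
          f"{attention_matrix_mib(n):>8.1f} MiB per head")
```

Doubling the context length quadruples the number of scores, which is why going from 512 to 32,768 tokens (64 times longer) costs 4,096 times more comparisons.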

The second approach focuses on improving encoding techniques. Encoding all the information from a multi-page document into a single embedding vector is difficult, if not impossible. Even with a model that has a long context window capable of handling large inputs, encoding everything from multiple pages into one vector may result in the loss of important information. Particularly for retrieval tasks, if you need to extract specific information from a sentence, a large embedding for multi-page text might not effectively capture it. In such cases, chunking long texts into smaller segments while maintaining the dependencies between them offers a more effective approach.

This blog will focus on the second direction, discussing available techniques for encoding long contexts in two different tasks: building Retrieval-Augmented Generation (RAG) systems and document classification.

Encoding Long Contexts in RAG

Building a RAG system requires integrating internal knowledge bases, which often involves encoding hundreds of pages of documents. Efficiently encoding these documents and facilitating retrieval and generation afterward remain core challenges. Here, I will explain two encoding techniques: naive chunking and late chunking.

Naive chunking

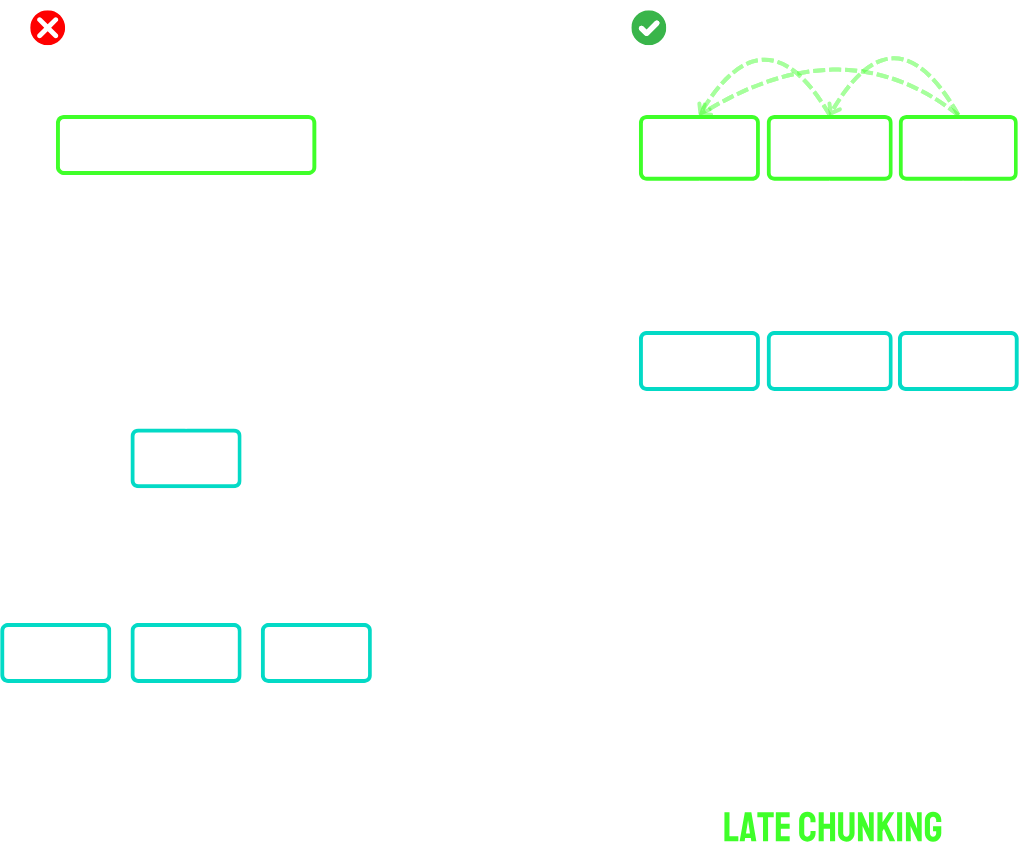

The naive encoding approach (shown on the left side of the image above) involves splitting the text into chunks beforehand and applying an embedding model to each chunk. A common method for generating a single embedding from each chunk is mean pooling, where the token-level embeddings of all tokens in the chunk are averaged.
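Mean pooling itself is simple enough to show in a few lines. The sketch below uses plain Python lists and made-up 2-dimensional token vectors; in practice the token embeddings would come from the embedding model.

```python
from typing import List

Vector = List[float]


def mean_pool(token_embeddings: List[Vector]) -> Vector:
    """Average token-level vectors into one chunk-level vector."""
    if not token_embeddings:
        raise ValueError("cannot pool an empty chunk")
    dim = len(token_embeddings[0])
    n = len(token_embeddings)
    return [sum(vec[i] for vec in token_embeddings) / n for i in range(dim)]


# Three hypothetical 2-dimensional token embeddings for one chunk.
chunk_tokens = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
chunk_embedding = mean_pool(chunk_tokens)
print(chunk_embedding)  # [1.0, 1.0]
```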

To split the text, we can use a fixed length (see an implementation in the OpenAI Cookbook), though in some cases, splitting at paragraph or sentence boundaries may better preserve the text’s meaning. This approach has been implemented in LangChain via the RecursiveCharacterTextSplitter class (see this blog for a more detailed explanation).
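The two splitting strategies can be sketched as follows. This is not the OpenAI Cookbook or LangChain implementation, only a minimal illustration: whitespace-separated words stand in for model tokens, the sentence splitter is a simple regular expression, and the function names `chunk_fixed` and `chunk_by_sentence` are my own.

```python
import re
from typing import List


def chunk_fixed(tokens: List[str], size: int, overlap: int = 0) -> List[List[str]]:
    """Split a token list into windows of `size` tokens, optionally overlapping."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):  # the last window reached the end
            break
    return chunks


def chunk_by_sentence(text: str, max_chars: int) -> List[str]:
    """Greedily pack whole sentences into chunks of at most `max_chars` characters.

    A single sentence longer than `max_chars` is kept whole rather than cut.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    chunks, current = [], ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


text = "Late chunking is new. Naive chunking is simple. Both split text."
print(chunk_fixed(text.split(), size=4, overlap=1))
print(chunk_by_sentence(text, max_chars=50))
```

The fixed-length version can cut a sentence in half, while the sentence-based version keeps sentences intact at the price of uneven chunk sizes.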

As a result, naive chunking can encode an entire document, however long, without cutting it off at the model's maximum context window. However, because it encodes each chunk independently, it breaks the dependencies between chunks: each chunk is treated as an isolated element, without considering the context before or after it.

Late chunking

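Late chunking, introduced by Jina AI, reverses the order of these two steps: the embedding model first processes the entire text to produce token-level embeddings that reflect the full context, and only afterward are these token embeddings split into chunks and mean-pooled into one embedding per chunk.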

It is important to note that effective late chunking relies on embedding models with long-context capabilities. In their example, they use jina-embeddings-v2-base-en, which can handle up to 8,192 tokens, roughly the equivalent of ten standard pages of text. Recently, other embedding models have become available, such as voyage-3, which supports up to 32,000 tokens.
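The difference between the two approaches comes down to the order of operations: naive chunking splits and then encodes, while late chunking encodes the whole text and then pools token embeddings per chunk. The sketch below contrasts the two. The `toy_encode` function is a hypothetical stand-in for a long-context embedding model (it is not the Jina AI implementation); it simply mixes each token vector with the mean of everything the encoder sees, to mimic how attention exposes tokens to their context.

```python
from typing import List, Tuple

Vector = List[float]
Span = Tuple[int, int]  # [start, end) token indices of one chunk


def mean_pool(vectors: List[Vector]) -> Vector:
    """Average a list of vectors element-wise."""
    n, dim = len(vectors), len(vectors[0])
    return [sum(v[i] for v in vectors) / n for i in range(dim)]


def toy_encode(tokens: List[Vector]) -> List[Vector]:
    """Hypothetical encoder: blend each token with the mean of its input."""
    context = mean_pool(tokens)
    return [[0.5 * t + 0.5 * c for t, c in zip(tok, context)] for tok in tokens]


def naive_chunking(tokens: List[Vector], spans: List[Span]) -> List[Vector]:
    """Split first, then encode and pool each chunk in isolation."""
    return [mean_pool(toy_encode(tokens[s:e])) for s, e in spans]


def late_chunking(tokens: List[Vector], spans: List[Span]) -> List[Vector]:
    """Encode the whole document once, then pool token vectors per chunk."""
    contextual = toy_encode(tokens)
    return [mean_pool(contextual[s:e]) for s, e in spans]


# A four-token document whose two halves point in different directions.
doc = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
spans = [(0, 2), (2, 4)]
print(naive_chunking(doc, spans))  # [[1.0, 0.0], [0.0, 1.0]]
print(late_chunking(doc, spans))   # [[0.75, 0.25], [0.25, 0.75]]
```

With naive chunking, each chunk embedding knows nothing about the other half of the document. With late chunking, each chunk embedding carries a trace of the surrounding text, which is the property that helps retrieval.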

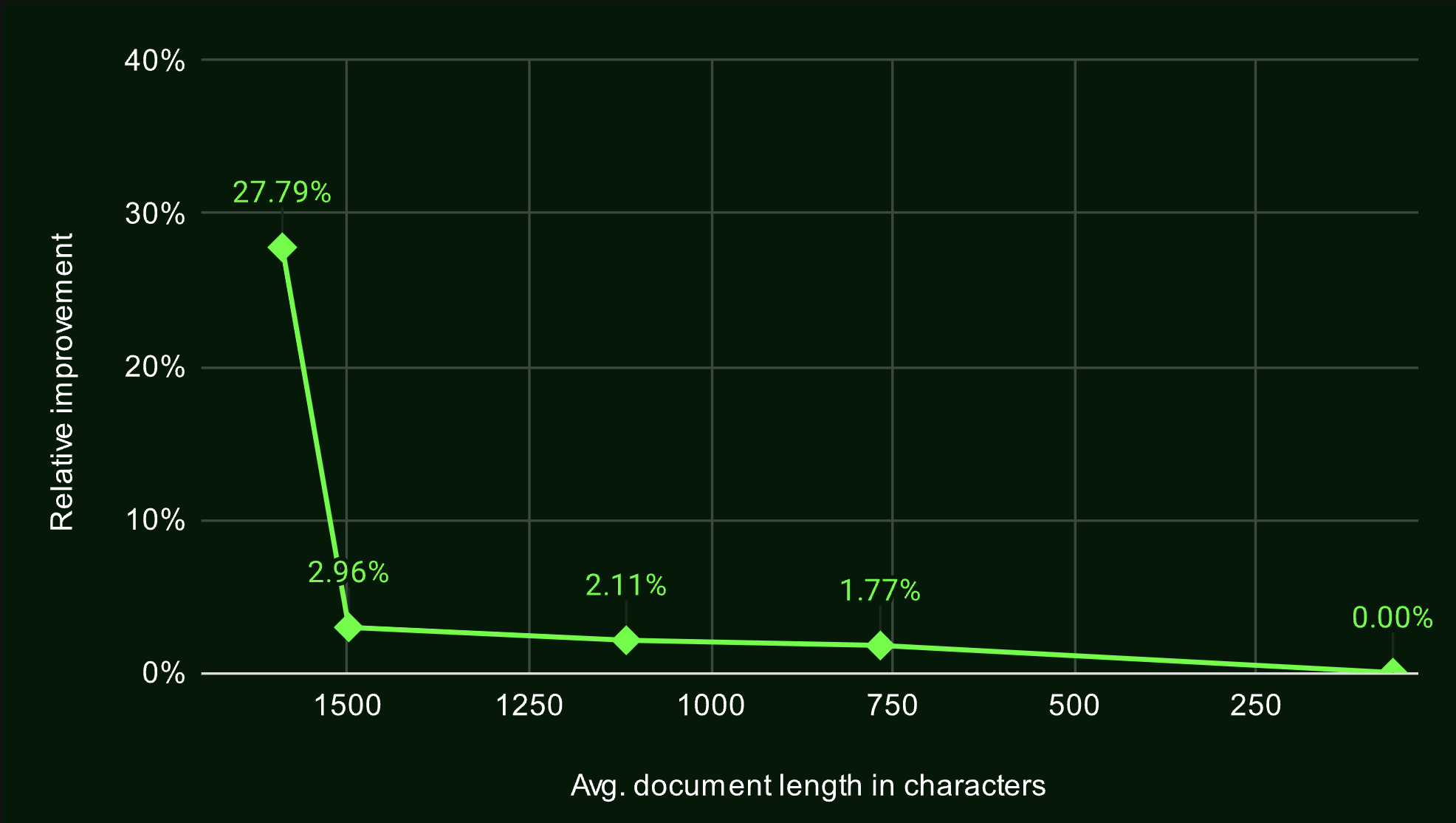

To evaluate the effectiveness of late chunking, they tested several retrieval tasks from the BEIR benchmark. In all cases, late chunking outperformed the naive approach, and the performance gap between the two methods widened as document length increased.

One last consideration about late chunking is that it requires an embedding model with a large context window to begin with. Because most of these models are not open source, this remains a barrier to adoption for many enterprises that rely on open-source models.

Encoding Long Contexts in Document Classification

Unlike RAG, which relies on retrieving relevant pieces of information, classification tasks typically require fine-tuning an LLM for the downstream task. In such tasks, a [CLS] token is added at the beginning of the input sequence, and its final embedding, which represents the entire sequence, is fed to a classifier layer added on top of the model. Fine-tuning then adjusts the weights of the pre-trained transformer model (like BERT) on task-specific labeled data, enabling it to make accurate predictions for that particular task.
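As a minimal sketch of what "adding a classifier layer on top" means, the snippet below applies one dense layer followed by a softmax to a [CLS] vector. The 3-dimensional embedding and the weights are made-up values for illustration; in a real setup the embedding comes from the encoder and the weights are learned during fine-tuning.

```python
import math
from typing import List


def linear(weights: List[List[float]], bias: List[float], x: List[float]) -> List[float]:
    """One dense layer: a logit per class computed from the [CLS] vector."""
    return [sum(w_i * x_i for w_i, x_i in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def softmax(logits: List[float]) -> List[float]:
    """Turn logits into probabilities (shifted by the max for stability)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical 3-dimensional [CLS] embedding and a 2-class head.
cls_embedding = [0.2, -1.0, 0.5]
W = [[1.0, 0.0, 1.0],   # weights for class 0
     [0.0, 1.0, 0.0]]   # weights for class 1
b = [0.0, 0.0]

probs = softmax(linear(W, b, cls_embedding))
predicted = max(range(len(probs)), key=probs.__getitem__)
print(predicted, [round(p, 3) for p in probs])
```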

A common challenge arises when the input text exceeds the context window of the LLM. To illustrate this, consider the EURLEX-57K dataset, a multi-label classification dataset based on European Union legal documents. It contains 57,000 legislative documents with an average length of 727 words, so many documents exceed BERT's 512-token limit once tokenized.

| Input(s) | Output(s) / Label(s) |
|---|---|
| Text: Commission Regulation (EC) No 1156/2001 of 13 June 2001 fixing the export refunds on white sugar and raw sugar exported in its unaltered state. THE COMMISSION OF THE EUROPEAN COMMUNITIES Having regard to the Treaty establishing the European Community, Having regard to Council Regulation (EC) No 2038/1999 of 13 September 1999 on the common organisation of the markets in the sugar sector(1), as amended by Commission Regulation (EC) No 1527/2000(2), and in particular point (a) of the second subparagraph of Article 18(5) thereof, Whereas: (1) Article 18 of Regulation (EC) No 2038/1999 provides that the difference between quotations or prices on the world market for the products listed in Article 1(1)(a) of that Regulation and prices for those products within the Community may be covered by an export refund. (2) Regulation (EC) No 2038/1999 provides that when refunds on white and raw sugar, undenatured and exported in its unaltered state, are being fixed account must be taken of the situation on the Community and world markets in sugar and in particular of the price and cost factors […] | 28 (Trade Policy) 93 (Beverages and Sugar) 94 (Foodstuff) |
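Because each document can carry several labels at once, the targets are usually encoded as multi-hot vectors and predictions are read off per label with a sigmoid and a threshold. The sketch below illustrates this with the three labels from the example row. The tiny label vocabulary, the extra "other" entry, the logits, and the 0.5 threshold are all assumptions for illustration, not values from the dataset.

```python
import math
from typing import Dict, List

# Hypothetical, tiny label vocabulary; the real dataset has far more labels.
label_to_index: Dict[str, int] = {"28": 0, "93": 1, "94": 2, "other": 3}


def multi_hot(labels: List[str], vocab: Dict[str, int]) -> List[int]:
    """Encode a set of labels as a 0/1 vector over the label vocabulary."""
    target = [0] * len(vocab)
    for label in labels:
        target[vocab[label]] = 1
    return target


def predict_labels(logits: List[float], vocab: Dict[str, int],
                   threshold: float = 0.5) -> List[str]:
    """Apply a sigmoid per label and keep labels at or above the threshold."""
    index_to_label = {index: label for label, index in vocab.items()}
    probs = [1.0 / (1.0 + math.exp(-z)) for z in logits]
    return [index_to_label[i] for i, p in enumerate(probs) if p >= threshold]


target = multi_hot(["28", "93", "94"], label_to_index)
print(target)  # [1, 1, 1, 0]
print(predict_labels([2.0, 0.3, 1.1, -3.0], label_to_index))  # ['28', '93', '94']
```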

Several approaches are available to bypass BERT’s maximum text length limit

-

Document Truncation: The simplest approach involves fine-tuning BERT by truncating long documents to the first 512 tokens (this can be done by setting

truncation=Truein the tokenizer function). - Longformer

: It is designed to process longer input sequences using an efficient self-attention mechanism that scales linearly with the input length. Unlike BERT, which can handle up to 512 tokens, Longformer can process up to 4,096 tokens. - Hierarchical Encoding

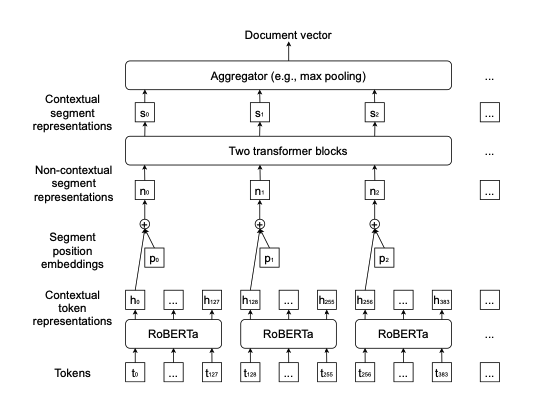

: A document is first split into segments, each of which should have less than 512 tokens. These segments can be independently encoded using any pre-trained Transformer-based encoders (e.g., RoBERTa in the Figure below). We sum the contextual representation of the first token from each segment up with segment position embeddings as the segment representation. Then the segment encoder—two transformer blocks are used to capture the interaction between segments and out- put a list of contextual segment representations, which are finally aggregated into a document representation, normally using max-pooling. You can find a detailed example of the implementation in this GitHub folder.
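The data flow of hierarchical encoding, compared with plain truncation, can be sketched as follows. This is a toy illustration, not the implementation from the GitHub folder mentioned above: `toy_token_encoder` is a hypothetical stand-in for a pre-trained encoder, the position embeddings are fixed made-up values rather than learned ones, and the two-block segment encoder is omitted entirely to keep the example short. The segment length of 510 is also an assumption, chosen to leave room for two special tokens.

```python
from typing import List

Vector = List[float]


def split_into_segments(token_ids: List[int], max_len: int) -> List[List[int]]:
    """Cut a token sequence into consecutive segments of at most `max_len`."""
    return [token_ids[i:i + max_len] for i in range(0, len(token_ids), max_len)]


def toy_token_encoder(segment: List[int], dim: int) -> List[Vector]:
    """Hypothetical stand-in for a pre-trained encoder such as RoBERTa."""
    return [[((tok + d) % 7) / 7.0 for d in range(dim)] for tok in segment]


def segment_representations(segments: List[List[int]],
                            position_embeddings: List[Vector],
                            dim: int) -> List[Vector]:
    """First-token vector of each segment plus its segment position embedding."""
    reps = []
    for pos, segment in enumerate(segments):
        first_token = toy_token_encoder(segment, dim)[0]
        reps.append([f + p for f, p in zip(first_token, position_embeddings[pos])])
    return reps


def max_pool(vectors: List[Vector]) -> Vector:
    """Element-wise maximum over segment vectors -> one document vector."""
    return [max(column) for column in zip(*vectors)]


token_ids = list(range(1200))      # a document of 1,200 hypothetical token ids
dim = 4
truncated = token_ids[:512]        # truncation: everything after 512 is lost
segments = split_into_segments(token_ids, max_len=510)
position_embeddings = [[0.01 * (pos + 1)] * dim for pos in range(len(segments))]
reps = segment_representations(segments, position_embeddings, dim)
# A real model would pass `reps` through the segment encoder here.
document_vector = max_pool(reps)
print(len(truncated), [len(s) for s in segments], len(document_vector))
# 512 [510, 510, 180] 4
```

Truncation sees 512 of the 1,200 tokens, while the hierarchical route lets every segment contribute to the final document vector.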

In the literature, different segmentation methods have been tested, including segmenting based on document structure.

As mentioned at the beginning, the context limitation arises from the transformer architecture. Some research has begun to focus on developing new architectures, such as State Space Models (SSMs). However, there is still limited understanding of how these models can improve tasks like RAG or classification.